If someone searches for “SkyReels V4 review,” they are usually past the curiosity stage. They are no longer asking whether AI video exists. They are asking whether this specific product is worth their time, their testing budget, and eventually their money. That means a useful review page cannot behave like a product landing page with a different title. It has to answer harder questions: what kind of workflow SkyReels V4 actually supports, where the positioning feels credible, where the proof is still thin, who should try it first, and what kind of buyer should wait for more evidence before committing.

That is the lens of this review. This page is not trying to replace the SkyReels V4 product page, which should carry direct product intent. It is not trying to replace the prompt guide, which should answer tutorial questions. It is not trying to replace pricing, which should own commercial intent. Instead, this review is the page where a user asks, “Does this look like a serious AI video workflow, or just another thin launch site?” and expects an evidence-based answer.

TL;DR

SkyReels V4 has a stronger strategic framing than many AI tool launches because it is positioned around a workflow, not just a one-shot generator. The product story centers on:

- multimodal input

- joint audio and video generation

- unified generation, repair, and editing tasks

- a more directed creative workflow rather than a random demo box

That is the good news. The caution is that the site still needs much more proof to fully earn those claims. The current implementation already gives users a usable generator surface and a clearer site architecture than many thin competitors, but a high-conviction review page needs screenshots, prompt examples, output notes, and repeatable workflow evidence.

My current verdict is:

- Positioning quality: strong

- Site architecture quality: strong

- Workflow promise: promising

- Evidence depth: not yet strong enough

- Commercial readiness: good foundation, but credibility still depends on proof content

If you want the product itself, start at the SkyReels V4 product page. If you want usage method, go to the SkyReels V4 prompt guide. If you want buying context, go to pricing. If you want an alternative framing, read the SkyReels V4 vs Sora comparison.

What this review is actually evaluating

The biggest mistake in AI tool reviews is pretending to evaluate everything at once. That creates vague verdicts that are not useful for users and not useful for SEO. For SkyReels V4, the right evaluation scope is narrower and more practical:

- Does the product story make sense?

- Does the current workflow support that story?

- Does the site structure match real search intent?

- Does the current implementation create buying confidence or hesitation?

- What proof still needs to be added to make this page trustworthy?

This matters because SkyReels V4 is not being introduced as a generic “AI video maker.” It is being framed as a video foundation model with multimodal inputs and a broader workflow that includes generation, repair, and editing. Those are stronger claims than “turn text into video.” Stronger claims can attract better search traffic, but they also raise the burden of proof.

In other words, SkyReels V4 should not be judged only on whether it has a text box and an upload button. It should be judged on whether the product and the site together support a believable creative workflow.

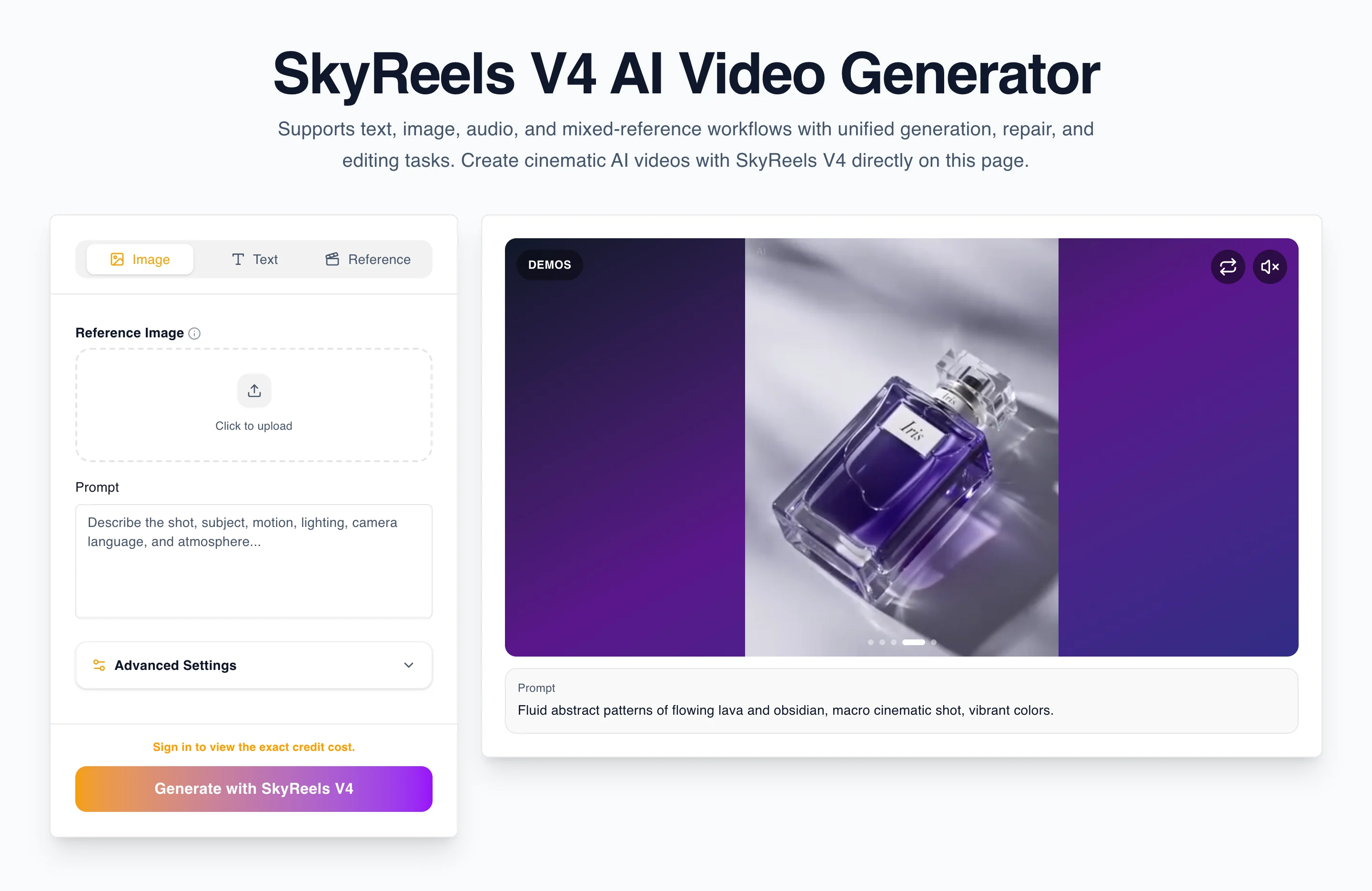

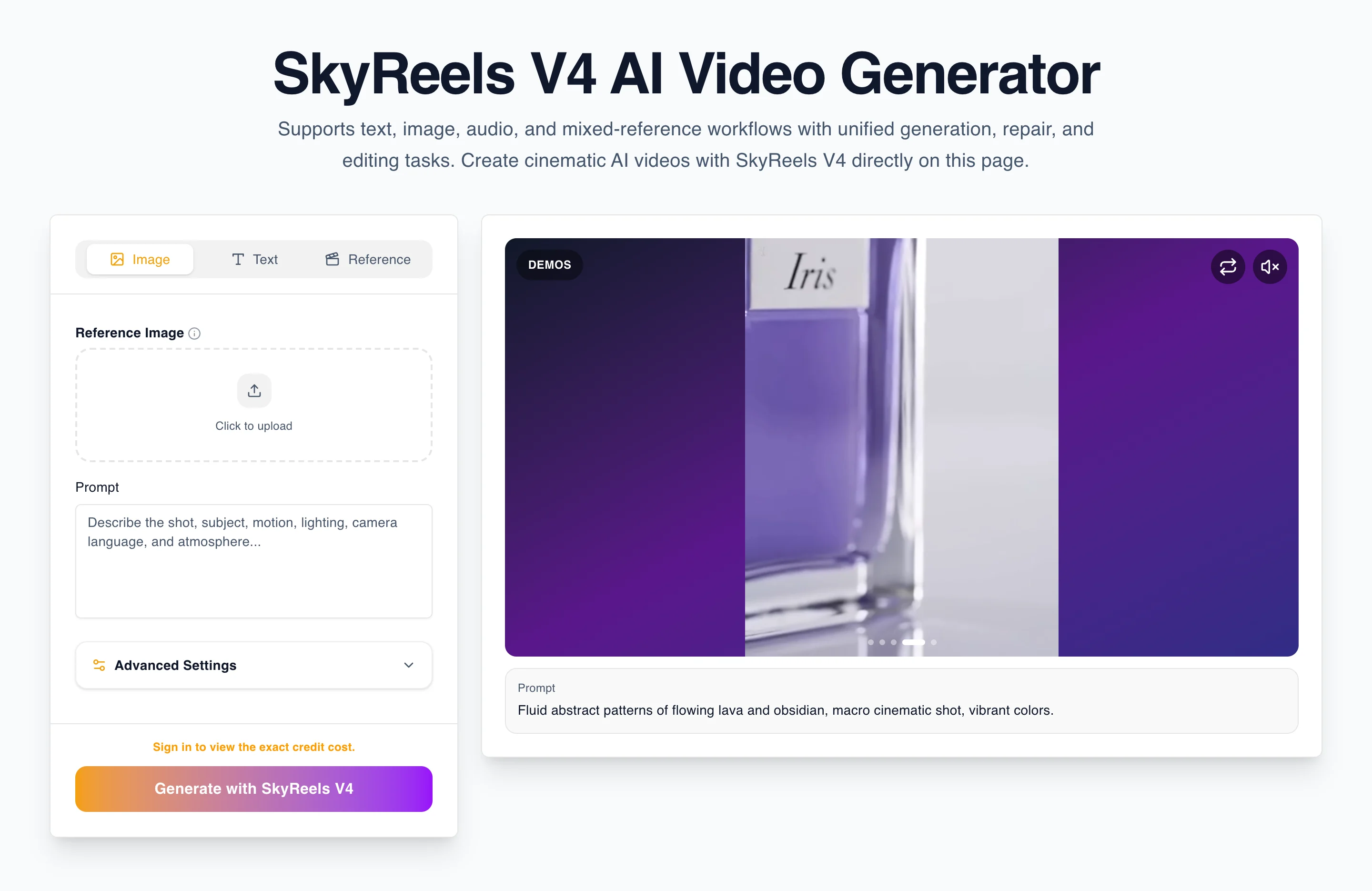

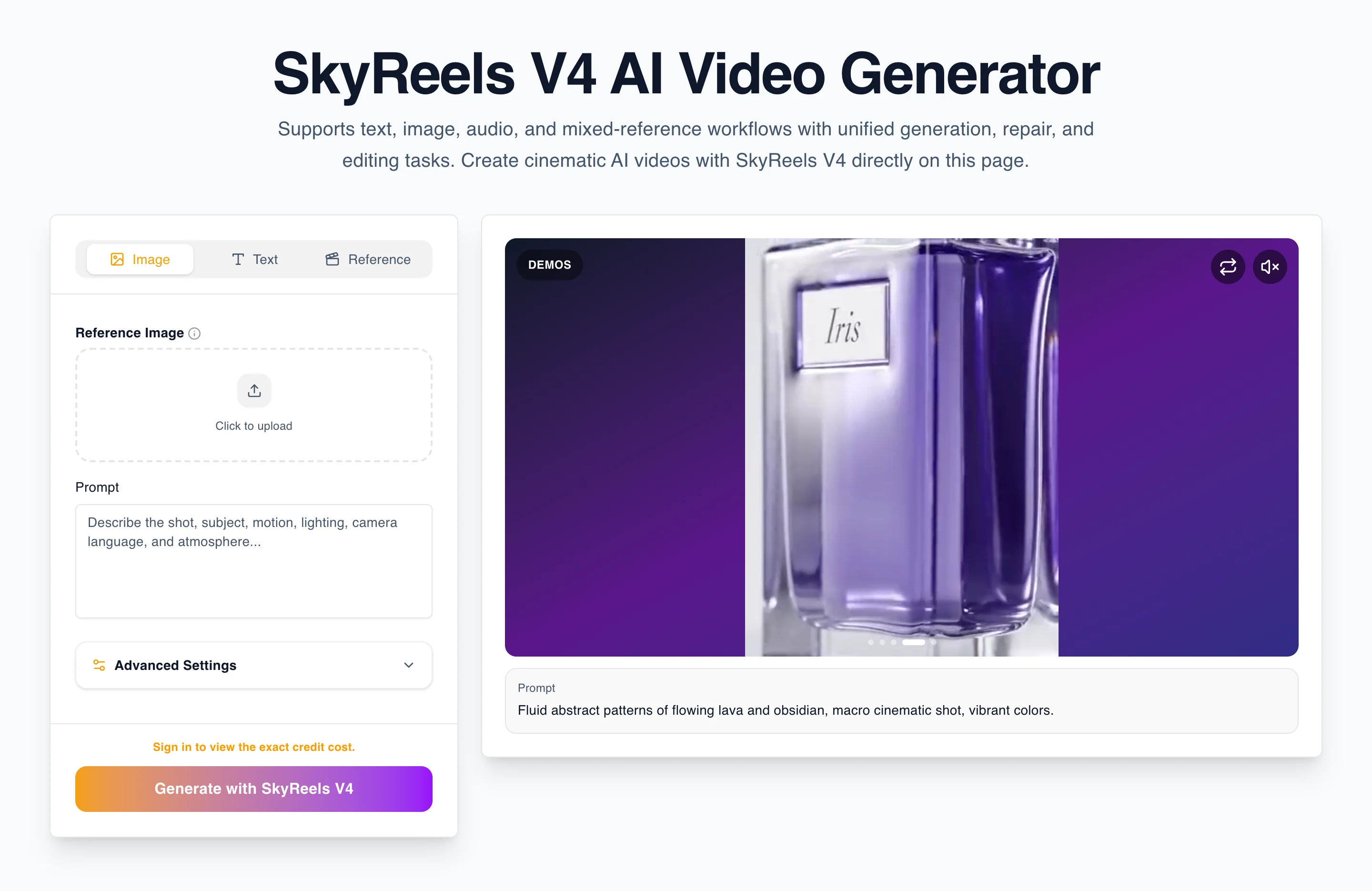

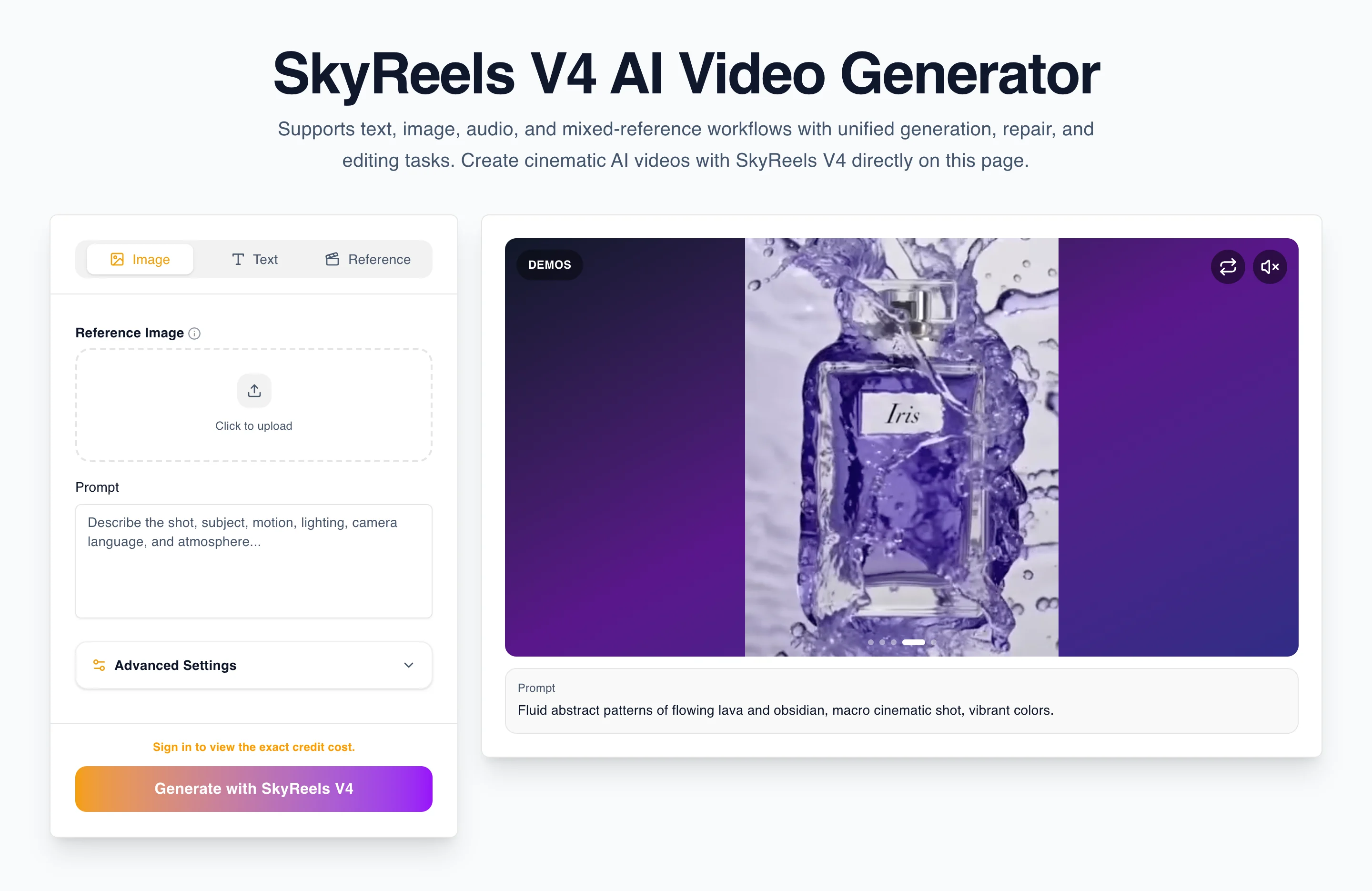

The current workflow, as exposed by the site

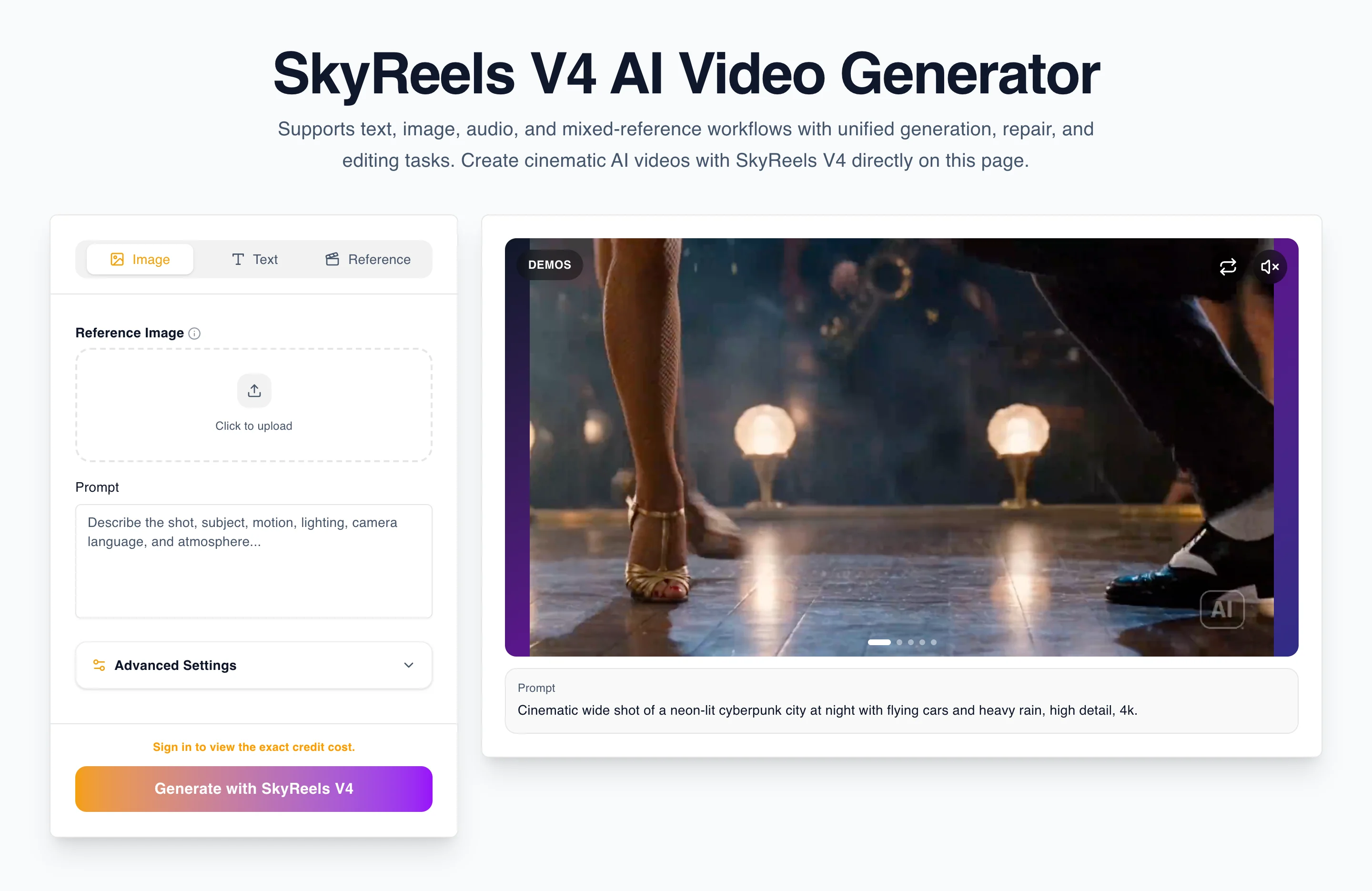

Based on the current implementation, SkyReels V4 is presented as a generator that supports:

- text-to-video

- image-to-video

- reference-based generation

- prompt-driven control over output

- a path for multimodal creative direction

That is already more useful than a homepage-only pitch. On the product page, the generator sits directly inside the hero workflow, which is the right choice. Users searching for a specific model page like /skyreels-v4 often want immediate interaction. They do not want to scroll past a dozen explanatory sections before discovering whether the tool is even usable.

The input structure also matters. A review reader is paying attention to whether the product treats prompting as a real workflow or as a toy. The existence of multiple modes, file uploads, prompt fields, negative prompt support, and configuration panels suggests that SkyReels V4 is being shaped as a more controlled generation experience. That supports the product narrative well.

What is still missing from the site is hard evidence of output quality across repeated runs. A serious review reader will want to know:

- whether image references actually improve consistency

- whether camera instructions are followed reliably

- whether audio guidance makes a visible difference

- whether output quality remains stable over multiple attempts

- whether the interface encourages efficient iteration or just retries

Those questions define whether SkyReels V4 feels like a structured creative tool or a promising label wrapped around a familiar backend pattern.

Where SkyReels V4 looks strong

1. The product framing is sharper than the average AI tool launch

Many AI video sites still rely on generic language like “create cinematic video in seconds.” That copy is broad enough to say almost nothing. SkyReels V4 is stronger because it consistently points back to a more specific frame: multimodal input, joint audio-video generation, and unified generation-repair-editing tasks.

That matters because it gives the site a real thesis. A product with a thesis is easier to rank and easier to sell. Users can remember it. Writers can build content around it. Comparison pages can explain it. Reviews can test it. Blog content can support it without repeating the homepage.

From a review perspective, that is one of the best signals on the site.

2. The page architecture respects search intent

This is another strong point. The current site already separates key search intents into dedicated destinations:

- /skyreels-v4 for SkyReels V4 product intent

- /skyreels-v3 for SkyReels V3 intent

- /skyreels-v4-prompt-guide for SkyReels V4 tutorial intent

- /skyreels-v4-review for SkyReels V4 review intent

- /skyreels-v4-vs-sora for SkyReels V4 comparison intent

- /pricing for SkyReels V4 commercial intent

- /blog for supporting long-tail SkyReels V4 content

This is exactly the kind of structure that makes a site easier to expand without turning every page into a keyword dump. It also improves internal link logic. A good blog post can send users to the review. A review can send users to pricing. A comparison page can send users back to the main product page. That kind of routing supports both user flow and authority flow.
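To make the intent split and the link routing concrete, here is a minimal sketch that models them as plain data and runs two sanity checks: no intent is claimed by two routes, and no page is left without an inbound link. The route names come from this review. The link list only encodes the links this review mentions, so it is an assumption about the real site, and the helper names are hypothetical.

```python
# Route-to-intent map, taken from the routes named in this review.
ROUTE_INTENT = {
    "/skyreels-v4": "product (V4)",
    "/skyreels-v3": "product (V3)",
    "/skyreels-v4-prompt-guide": "tutorial",
    "/skyreels-v4-review": "review",
    "/skyreels-v4-vs-sora": "comparison",
    "/pricing": "commercial",
    "/blog": "long-tail support",
}

# Internal links mentioned in this review only (an assumption; the live
# site may link differently or more densely).
INTERNAL_LINKS = {
    "/blog": ["/skyreels-v4-review"],
    "/skyreels-v4-review": [
        "/skyreels-v4",
        "/skyreels-v4-prompt-guide",
        "/skyreels-v4-vs-sora",
        "/pricing",
        "/blog",
    ],
    "/skyreels-v4-vs-sora": ["/skyreels-v4"],
}


def duplicate_intents(route_intent):
    """Return intents that more than one route tries to own."""
    routes_by_intent = {}
    for route, intent in route_intent.items():
        routes_by_intent.setdefault(intent, []).append(route)
    return {i: r for i, r in routes_by_intent.items() if len(r) > 1}


def pages_without_inbound_links(route_intent, links):
    """Return known routes that no listed page links to."""
    targets = {target for outgoing in links.values() for target in outgoing}
    return sorted(route for route in route_intent if route not in targets)


print(duplicate_intents(ROUTE_INTENT))
print(pages_without_inbound_links(ROUTE_INTENT, INTERNAL_LINKS))
```

With only the links described here, the second check flags /skyreels-v3 as having no inbound link. That does not mean the real site orphans the page. It shows the kind of gap this check is meant to surface once the full link map is filled in.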

3. The generator is embedded where users expect it

For model-specific product pages, generator placement is critical. Hiding the real tool too far down the page creates friction. Putting it into the hero area makes the page feel like an actual product surface rather than a static sales page.

That is important for review readers because it signals seriousness. Even before a user runs a generation, they can judge whether the workflow feels intentional. SkyReels V4 already benefits from this.

4. The site has room for a clear funnel

The current page map suggests a usable intent funnel:

- homepage introduces the product universe

- product page explains the model

- guide teaches how to prompt

- review evaluates quality and workflow

- comparison handles alternatives

- pricing captures purchase intent

From a buying-confidence perspective, this is much stronger than a site that only has a homepage and a pricing page.

Where SkyReels V4 still needs proof

1. The strongest claims are not yet matched by enough evidence

This is the biggest gap. The stronger the promise, the higher the proof requirement. If SkyReels V4 is going to be positioned around unified generation, repair, and editing, then the site needs to show more than text descriptions of those ideas.

A review page should eventually contain:

- side-by-side prompt and output examples

- notes on what changed after a second generation

- examples of reference-guided consistency

- examples of failure cases and how to correct them

- a clearer explanation of when V4 beats V3 in practice

Without this, the product still looks promising, but not yet fully proven.

2. The review currently depends too much on positioning logic

A good AI review should not be only a copy critique. It should include product behavior. Right now, the structure is correct, but the evidence layer still needs to be expanded. That means future revisions of this review should document at least one repeatable test scenario.

For example:

- same subject, different camera language

- same subject with and without image reference

- one scene attempted in V3 and V4

- one prompt written loosely and then rewritten using the guide framework

- one example where the output succeeds and one where it breaks down

That kind of material does not just help users. It also makes the page harder to replace in search results because it becomes grounded in actual product use.
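One way to keep such tests repeatable is to write the plan down as structured records before running anything. The sketch below is only an illustration: the `TestRun` fields, the example prompts, and the helper names are hypothetical, and nothing here calls the SkyReels product. The reviewer runs each entry by hand in the generator and fills in the notes afterward.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TestRun:
    scenario: str                  # which comparison this run belongs to
    variant: str                   # which side of the comparison it is
    prompt: str
    model: str = "V4"
    image_reference: bool = False
    notes: str = ""                # reviewer observations after viewing output
    usable: Optional[bool] = None  # None until the reviewer judges the result


def build_plan(subject: str) -> list:
    """Build paired runs for three of the scenarios listed above."""
    return [
        TestRun("camera language", "static", f"{subject}, static wide shot"),
        TestRun("camera language", "tracking", f"{subject}, slow tracking shot"),
        TestRun("image reference", "without", subject),
        TestRun("image reference", "with", subject, image_reference=True),
        TestRun("V3 vs V4", "V3", subject, model="V3"),
        TestRun("V3 vs V4", "V4", subject, model="V4"),
    ]


def pending(plan: list) -> list:
    """Runs that still need a usable / not usable verdict."""
    return [run for run in plan if run.usable is None]


plan = build_plan("a ceramic mug on a wooden table")
print(f"{len(pending(plan))} of {len(plan)} runs still need a verdict")
```

Keeping each comparison as a pair with the same subject is the point of the design: only one variable changes per pair, so any difference in output can be attributed to it.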

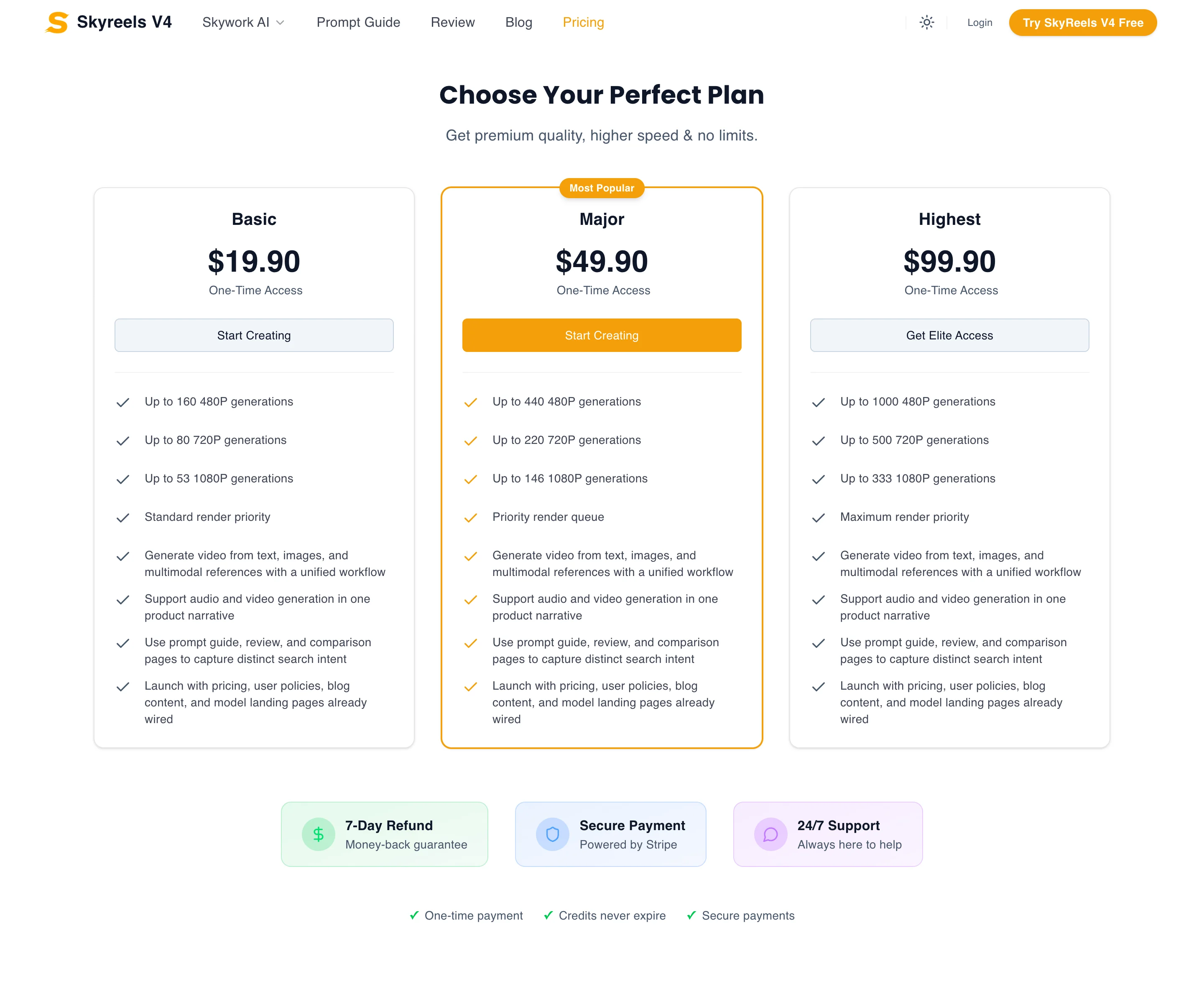

3. Pricing trust still depends on workflow economics

The listed plans are clear enough, but review readers do not decide based on price labels alone. They decide based on whether the workflow is efficient enough to justify repeated usage. If a tool needs too many retries, even a low headline price can feel expensive. If the workflow reliably gets users close to a usable result, a higher plan can still feel worth it.

That is why a review page should always connect quality and cost. It is not enough to say “the pricing is transparent.” The real question is whether the user gets enough control, consistency, and revision efficiency per paid session.
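The link between retries and cost can be made explicit with simple arithmetic. If each attempt costs a fixed amount and independently produces a usable result with some probability, the expected number of attempts per usable result is one divided by that probability. The sketch below applies this. The prices and usable rates are invented for illustration and are not SkyReels pricing or measured results.

```python
def expected_cost_per_usable_output(cost_per_attempt: float, usable_rate: float) -> float:
    """Expected spend to get one usable clip.

    Assumes attempts are independent with a constant usable rate, so the
    expected number of attempts is 1 / usable_rate.
    """
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in (0, 1]")
    return cost_per_attempt / usable_rate


# Hypothetical numbers: a cheap plan that needs many retries versus a
# pricier plan that lands a usable result more often.
cheap_plan = expected_cost_per_usable_output(cost_per_attempt=0.50, usable_rate=0.20)
pricier_plan = expected_cost_per_usable_output(cost_per_attempt=1.00, usable_rate=0.50)

print(f"cheap plan:   {cheap_plan:.2f} per usable clip")
print(f"pricier plan: {pricier_plan:.2f} per usable clip")
```

Under these made-up figures the plan with the lower headline price ends up costing more per usable clip, which is the point of the paragraph above: the usable rate matters as much as the price label.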

The current pricing page is the correct destination for plan selection, but the review should prepare the reader for that decision by explaining what kind of user is likely to feel satisfied with the workflow.

A more practical workflow assessment

For solo creators

SkyReels V4 looks best for creators who need a structured interface and who care about controllable outputs more than raw novelty. Someone making concept videos, product teasers, short motion experiments, or mood-driven social clips may value a workflow that supports text, image, and reference reasoning more than a one-click viral demo.

For this user group, the key value questions are:

- Can I get closer to the intended shot with fewer retries?

- Can I keep a character or visual identity more consistent?

- Can I turn one rough idea into several workable variants?

- Does the product help me iterate instead of just regenerate?

If SkyReels V4 can prove those points through examples, it becomes much more compelling for solo users.

For marketing teams

Marketing teams usually care less about artistic novelty and more about repeatability. They want predictable workflows for ad creatives, short promotional sequences, landing-page visuals, and campaign experiments. A multimodal system is attractive if it reduces the time spent explaining the same creative direction over and over.

This is where SkyReels V4 has a strong theoretical pitch. If multimodal references actually reduce ambiguity, then creative teams can spend less time fighting consistency issues. But again, this is where the product needs evidence. A review page for this audience should eventually show whether:

- reference images help preserve style across variations

- scene direction remains stable when prompts are adjusted

- outputs can be refined rather than rebuilt from scratch

- the workflow is clear enough for non-specialists

For agencies and in-house creative teams

This audience will judge the product more harshly. They will immediately ask whether the site is describing a real workflow or just marketing a future roadmap. Their expectations are higher because they think in terms of delivery, revisions, and client-facing consistency.

For these users, a promising signal is the product narrative itself. A weak signal is the current lack of hard comparison evidence. They will want to know whether the “generation, repair, and editing” promise leads to lower revision cost or simply better messaging.

That makes the review page strategically important. It has to become the place where this audience sees evidence, not just claims.

SkyReels V4 vs thinner AI tool sites

One of the best things about the current SkyReels V4 setup is that it does not feel like a single-page clone with a pricing block attached. The site already has a more deliberate structure than many AI tool launches:

- dedicated product pages

- a separate review route

- a dedicated prompt guide

- a comparison route

- blog support for long-tail SEO

That means the site can build topical authority over time instead of relying on homepage luck. Many AI tool sites fail because they try to rank one page for every keyword cluster. SkyReels V4 is better positioned than that.

Still, structural quality is not the same as trust. Trust comes from content quality, documented workflow evidence, and honest evaluation.

The review criteria that matter most for buyers

When I strip away the branding and the launch narrative, the real buyer questions look like this:

1. Does it help me get to a usable output faster?

This is the most important question. If the multimodal workflow reduces ambiguity and failed attempts, that is a real advantage. If it only increases the number of controls without increasing useful precision, then the complexity may not be worth it.

2. Does it make comparison against alternatives easier?

A good site should not hide from alternatives. The existence of the SkyReels V4 vs Sora page is a positive signal. It tells users that the site is willing to situate itself inside a broader AI video market.

3. Does the content help me self-qualify?

Strong AI tool sites help users decide whether they are the right user. That means:

- product page for “what is this?”

- guide for “how do I use this?”

- review for “is it actually good?”

- pricing for “is it worth paying for?”

SkyReels V4 is already close to this pattern. That is a meaningful strength.

4. Does the review feel honest about gaps?

This matters more than most teams realize. A review page that only praises the product is less credible. Users trust pages that acknowledge what still needs proof. In the case of SkyReels V4, the current honest position is:

- the strategic framing is strong

- the site architecture is good

- the workflow surface is promising

- the evidence layer still needs to catch up

That is a credible assessment, and credibility itself is a conversion asset.

Suggested screenshots and proof assets this page still needs

To turn this from a solid strategic review into a strong ranking page, I would add:

Screenshot set A: generator workflow

- generator hero above the fold

- prompt panel with image mode active

- reference mode showing multiple file inputs

- current mode indicator and preview panel

Screenshot set B: testing sequence

- first generation attempt from a broad prompt

- revised prompt after adding camera language and identity constraints

- output comparison notes

Screenshot set C: multimodal control

- image reference upload state

- optional audio input state

- resulting preview output

Screenshot set D: conversion context

- pricing section

- internal links from review to product and guide

- comparison page excerpt

Those are not decorative additions. They are what will make the review feel like a real product evaluation page.

Who should try SkyReels V4 now, and who should wait

The best early users are people who are comfortable evaluating a promising workflow before every proof layer is complete. That usually includes:

- solo creators who like testing new generation workflows

- marketers who want to explore multimodal prompting as a creative advantage

- teams that already understand AI video tools and can tolerate some experimentation

These users can get value from the current product direction because the site already exposes a meaningful workflow and a clear intent structure. They can use the product page to test the core model, the prompt guide to tighten prompting logic, and pricing to decide how much experimentation budget makes sense.

The users who may want to wait are those who need immediate proof of reliability before spending serious time or budget. That includes:

- agency teams with client deadlines

- operators who need repeatable output without much manual refinement

- buyers who compare tools mainly through documented test results

For this group, the current strategic framing is promising, but the missing screenshots, repeatable examples, and before-and-after evidence still matter. Once those assets are added, this review will become far more persuasive for skeptical evaluators.

Final verdict

SkyReels V4 is currently stronger as a product concept and site architecture than as a fully proven review target. That is not a dismissal. In fact, it is one of the more promising positions an AI video site can be in at this stage.

Why?

- The product story is clearer than average.

- The workflow framing is more serious than average.

- The page structure respects intent.

- The internal link architecture is already moving in the right direction.

Why not give it a fully bullish verdict yet?

- The review still needs real workflow proof.

- The product claims are ambitious enough that screenshots, tests, and examples are mandatory.

- The strongest differentiators need concrete evidence to convert skeptical users.

So the practical conclusion is this:

SkyReels V4 looks promising, credible, and structurally well positioned, but it still needs a deeper evidence layer before this review can become a truly high-conviction page.

If you want the direct product surface, go back to the SkyReels V4 product page. If you want to improve your prompting, use the SkyReels V4 prompt guide. If you want to compare alternatives, read SkyReels V4 vs Sora. If you are deciding whether the workflow is worth paying for, open pricing. And if you want more context before converting, use the supporting blog as the next step in the journey.