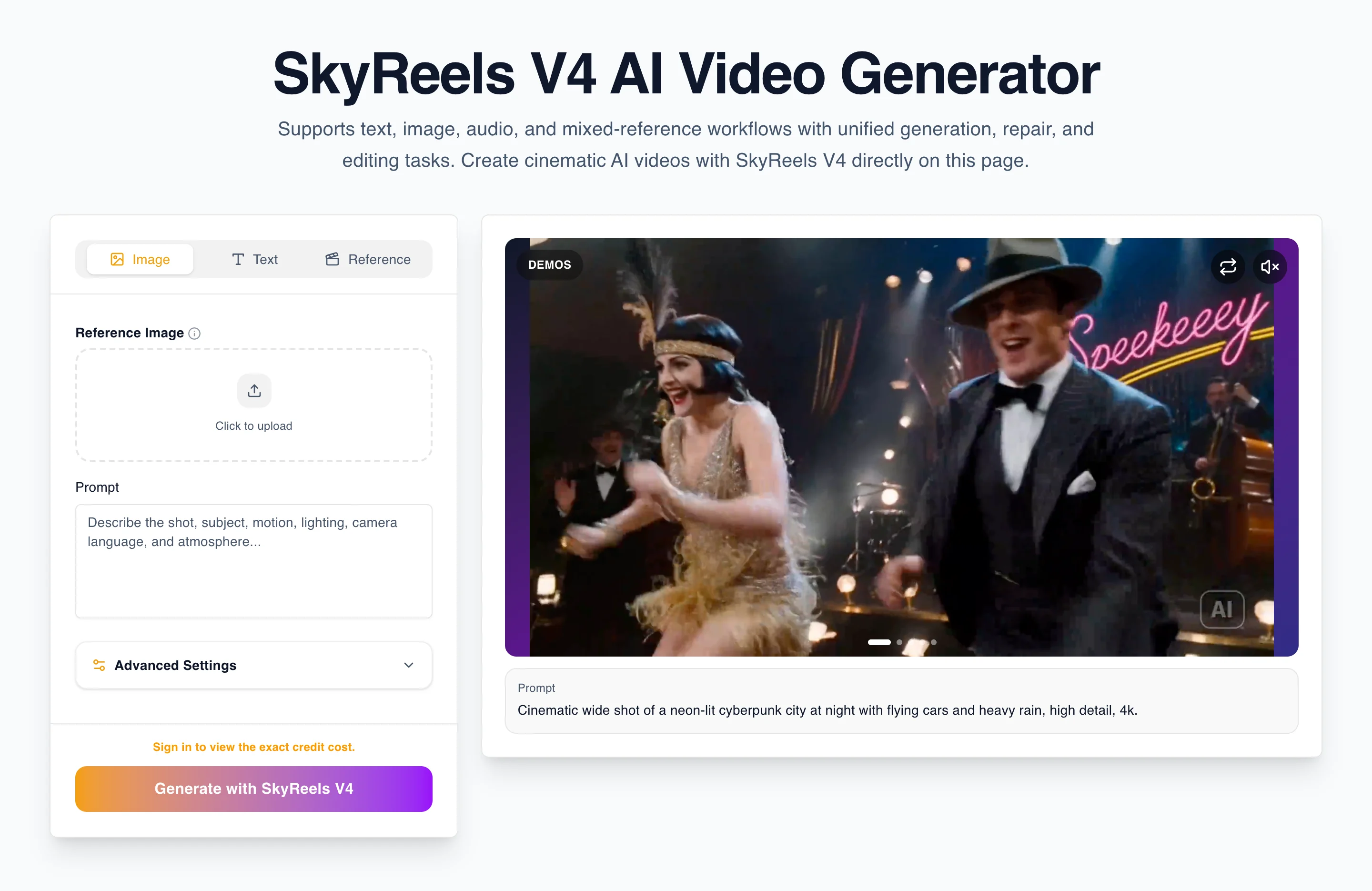

SkyReels V4 enters the market with positioning that differs from the standard "text in, video out" story. The core narrative is that SkyReels V4 is a video foundation model built for multimodal input, joint audio and video generation, and a broader workflow that spans generation, repair, and editing. That framing matters because user intent around AI video tools has matured. People are no longer searching only for a flashy demo. They are looking for systems they can understand, evaluate, and buy with confidence.

This first-look article is not the same thing as the SkyReels V4 review. The review page is where evaluation intent belongs. It is where readers should expect detailed judgment, strengths, weaknesses, and buying guidance. This article is more foundational. It answers a different question: why does the multimodal framing around SkyReels V4 matter in the first place, and why should creators or operators care?

That distinction matters for both user experience and SEO. A user searching for "SkyReels V4 first look" or "what is SkyReels V4" usually wants orientation before they want a verdict. They need a conceptual map. They want to know what kind of product this is, how it is being framed, and why it may deserve a deeper test. If this page tries to become a review, a tutorial, and a pricing pitch all at once, it stops serving that intent well.

The first reason SkyReels V4 matters: the story is workflow-first, not gimmick-first

Many AI video pages still sell novelty. They show a clip, promise magic, and assume the user will infer the workflow later. That approach may work for social posts or quick landing pages, but it usually creates a problem once the reader asks practical questions:

- How do I keep results more consistent?

- Can I use image references?

- Is the tool only for one-shot generation?

- What happens when I need to fix a weak clip?

- How do I decide whether the product is worth testing seriously?

SkyReels V4 has a more useful story because it can be described as a workflow system rather than only a model demo. Terms like multimodal input, joint audio and video generation, and unified generation, repair, and editing tasks give the product a stronger operational identity. That does not prove technical superiority, but it gives readers a clearer conceptual framework for evaluating the product.

And conceptual frameworks matter. They shape how users test the product, how publishers write about it, how internal stakeholders evaluate it, and how a site routes readers toward the next relevant page.

Why the phrase "video foundation model" matters

The phrase "video foundation model" is doing more than sounding advanced. It suggests that the product is not limited to a single narrow task. It suggests a broader underlying capability that can support multiple modes of input and multiple downstream actions. For readers, that changes the expectation from:

- "Can this make a cool clip?"

to:

- "Can this become part of a repeatable video workflow?"

That is a much more commercially useful question.

Creators do not only need novelty. Teams do not only need a hero clip. They need a system that can survive real use:

- testing multiple prompts

- refining identity

- adjusting camera behavior

- fixing weak generations

- moving from concept to usable asset

When a model is framed as supporting both creation and correction, it becomes easier to justify deeper evaluation.

Why multimodal input is more than a feature checklist

At first glance, "multimodal input" can sound like a generic platform claim. But it matters because it changes how instructions are distributed across input types.

Traditional prompting often overburdens text. A user tries to encode:

- subject identity

- clothing

- composition

- lighting

- motion

- camera behavior

- atmosphere

- pacing

inside one sentence or paragraph.

That creates ambiguity fast. Text alone can carry a lot, but not always cleanly. A multimodal workflow makes it easier to separate responsibilities:

- text can define action and scene logic

- images can define subject identity or style

- audio can define rhythm or mood context

- editing or repair logic can refine the output after the first pass

That does not mean multimodal input magically solves every generation problem. It means the system can be approached more like a production workflow and less like a slot machine.
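The separation of responsibilities above can be sketched as a small data structure. This is a minimal illustration only: `GenerationBrief` and its field names are hypothetical and do not describe an actual SkyReels V4 API. The point is that each kind of instruction gets its own slot instead of being packed into one prompt string.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GenerationBrief:
    """Hypothetical container for one request; not a SkyReels V4 API object."""

    text_prompt: str                                            # action and scene logic
    reference_images: list[str] = field(default_factory=list)   # subject identity or style
    audio_reference: Optional[str] = None                       # rhythm or mood context
    refinement_notes: list[str] = field(default_factory=list)   # repair or editing after the first pass

    def channels_used(self) -> list[str]:
        """Return which input channels carry instructions in this brief."""
        used = ["text"]
        if self.reference_images:
            used.append("image")
        if self.audio_reference:
            used.append("audio")
        if self.refinement_notes:
            used.append("refinement")
        return used


brief = GenerationBrief(
    text_prompt="A courier cycles through a rainy street, slow tracking shot",
    reference_images=["courier_front.png"],
    refinement_notes=["soften the rain in the last second"],
)
print(brief.channels_used())  # ['text', 'image', 'refinement']
```

Writing a brief this way makes it obvious when the text prompt is carrying a job, such as subject identity, that a reference image could carry instead.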

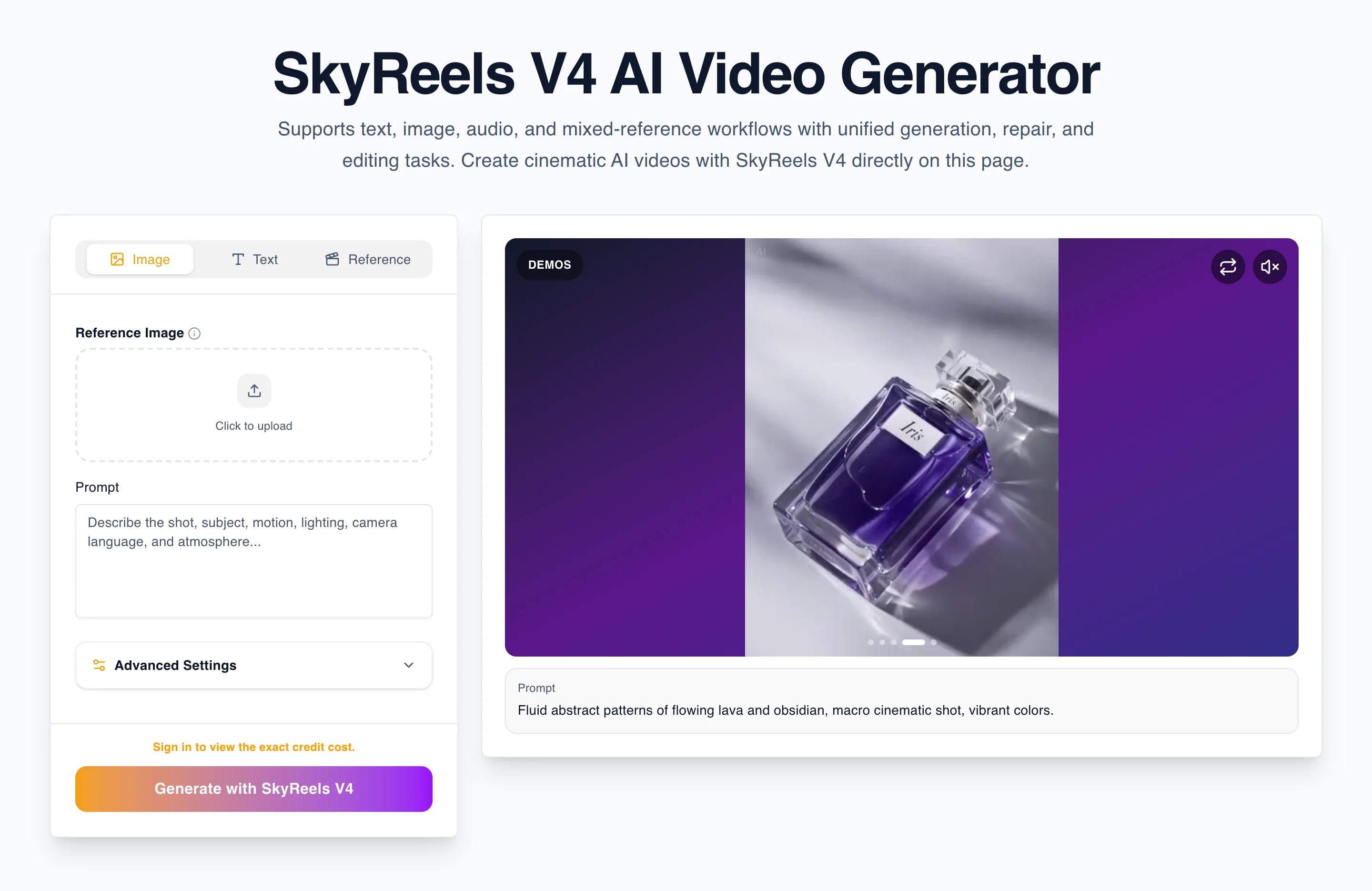

Why this matters for creators

For creators, multimodal framing matters because consistency is usually the first real problem after the novelty phase.

A creator can get one beautiful output from many tools. The harder question is whether they can get:

- another output with similar identity

- another shot in the same atmosphere

- another clip that still fits the same campaign or sequence

- a revised shot without restarting the entire process

That is where SkyReels V4's positioning becomes meaningful. If the product story is centered on unified generation, repair, and editing, then the buyer expectation is not just "show me one good frame." It becomes "show me a workflow I can continue using."

That is especially important for:

- short-form video creators

- product marketers

- creative agencies

- affiliate publishers building demo and review content

- operators testing whether an AI video tool can fit inside a repeatable funnel

Why this matters for site structure and search intent

One of the reasons SkyReels V4 is a strong content opportunity is that the product naturally supports multiple intent pages without forcing them to overlap.

The product page serves model intent.

The prompt guide serves tutorial intent.

The review serves evaluation intent.

The comparison page serves decision intent.

And pricing serves commercial intent.

That matters because a lot of AI tool sites fail by trying to make one page carry every query cluster. The result is weak intent matching, weak internal linking, and pages that sound repetitive instead of helpful.

SkyReels V4 has a better opportunity because the product story is broad enough to justify multiple real destinations.

A first-look buyer should care about one thing most: clarity of next steps

When people arrive at a new AI product, they often do not need every answer immediately. What they need is confidence that the site knows where to send them next.

If a user is curious, the product page should orient them.

If they want proof, the review page should take over.

If they want to learn usage, the guide page should help them.

If they want to compare alternatives, the comparison page should frame the decision.

If they want to spend money, pricing should be easy to inspect.

This kind of routing is not an afterthought. It is part of what makes a product feel usable before the user even signs up.
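The routing described above amounts to a lookup from reader intent to destination page. A minimal sketch, assuming made-up intent labels and placeholder paths (neither comes from the actual site), with the product page as an assumed fallback for unrecognized intents:

```python
# Hypothetical intent-to-page map; labels and paths are placeholders, not real site URLs.
NEXT_PAGE_BY_INTENT = {
    "curious": "/product",
    "wants_proof": "/review",
    "wants_to_learn": "/prompt-guide",
    "comparing": "/compare",
    "ready_to_buy": "/pricing",
}


def next_page(intent: str) -> str:
    """Return the page for a reader intent, defaulting to the product page."""
    return NEXT_PAGE_BY_INTENT.get(intent, NEXT_PAGE_BY_INTENT["curious"])


print(next_page("wants_proof"))  # /review
print(next_page("unknown"))      # /product
```

Keeping the map to one destination per intent mirrors the article's argument: each page does one job, and any page can point the reader to the next.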

What makes SkyReels V4 strategically interesting right now

There are three reasons this product is strategically interesting even before the site is fully mature.

1. The product thesis is specific

It is not framed as "another AI video generator." It is framed around multimodal workflow, audio-video coordination, and generation plus repair plus editing. That is a stronger thesis than generic hype language.

2. The site can map search intent cleanly

You can build a homepage, product pages, guide, review, comparison page, pricing page, and blog posts that do distinct jobs. That is good for both users and SEO.

3. The brand can borrow technical familiarity while staying commercially distinct

Even if some parts of the underlying generation path currently align with interfaces similar to Wan 2.5 or Wan 2.6, the site-level product packaging can still be distinct. Users do not evaluate only the backend. They evaluate the experience, the workflow framing, the documentation, the buying journey, and the clarity of the offer.

What a "first look" should honestly say

A useful first-look article should not overclaim. It should say:

- here is why the positioning is interesting

- here is what the product story is trying to solve

- here is why multimodal framing matters

- here is what kinds of users may care

- here is what still needs deeper proof

That last part is important. A first-look article gains trust when it admits what it cannot settle yet.

At this stage, a first-look page can credibly say that SkyReels V4 has:

- a stronger workflow story than many generic AI video pages

- a site architecture that can support clear intent routing

- a useful narrative around multimodal generation and editing

But it should not pretend that this alone replaces real testing. That is what the review page and future screenshot-backed case studies are for.

A practical example of why multimodal framing changes the user mindset

Imagine two user journeys.

In the first journey, the user sees an AI video tool that says:

"Enter a prompt and generate a clip."

That is simple, but it also encourages the user to throw everything into text.

In the second journey, the user sees a product framed around:

- prompt

- image reference

- audio context

- generation

- repair

- editing

That second framing encourages a more modular mental model. The user stops asking "what magical sentence should I write?" and starts asking:

- what should text control?

- what should the image reference control?

- what should be refined later instead of forced now?

That is a much better foundation for stable creative work.

Why internal linking is part of the product story

For AI products, internal links are not just navigation. They are workflow guidance.

This article should naturally point readers to:

- the SkyReels V4 product page if they still need the product overview

- SkyReels V4 prompt guide if they want to learn how to operate the workflow

- SkyReels V4 review if they want a real evaluation

- SkyReels V4 vs Sora if they are already comparing alternatives

- pricing if they are close to a buying decision

That means the blog becomes part of the product system. It is not just publishing for publishing's sake. It is a routing layer that helps users move to the page that best answers their next question.

Who should pay attention to SkyReels V4 first?

Creators exploring multimodal prompting

If your main problem is prompt ambiguity and consistency, SkyReels V4 is worth paying attention to because the product story is already built around a richer control model than generic text-only generation pages.

Affiliate publishers and SEO operators

If you are building a content cluster around a new AI tool, SkyReels V4 is attractive because the intent structure is naturally clean. You can separate review, guide, comparison, and pricing without stuffing all that intent into one page.

Marketing and content teams

If your team needs repeatable outputs rather than occasional wow moments, a workflow-first AI video product is easier to test and easier to explain internally.

Agencies and evaluators

If you need to decide whether a tool belongs in a broader production process, SkyReels V4 is interesting because its site and messaging already create room for a more serious evaluation framework.

What still needs to happen for this product story to fully land

The next step is not more slogans. It is proof.

To fully support the multimodal and workflow-centered story, the site should continue adding:

- screenshot-backed guide sections

- review sections with test criteria

- before-and-after prompt revisions

- identity consistency examples

- comparison tables against benchmark tools

- pricing explanations that map to real usage patterns

That is how a strong first-look story becomes a durable content system.

Final take

The strongest first-look framing for SkyReels V4 is not "here is another AI video model." It is "here is a multimodal AI video product designed to unify generation, repair, and editing tasks, supported by a site structure that gives every major search intent its own destination."

That makes the product easier to understand, easier to publish about, and easier to improve over time.

If you are new to the product, the best next step is to read the SkyReels V4 product page. If you want to understand real usage and buying fit, go straight to the review page. If your next question is operational, read the prompt guide. And if you are already deciding whether this is the right AI video tool for your stack, compare it directly in SkyReels V4 vs Sora.